Before: Strong Product, Zero AI Presence

When search behaviour shifted and users started asking ChatGPT, Perplexity, or Google AI “what’s the best cheap VPS for developers?”, Hetzner wasn’t in the answer. Competitors with larger marketing budgets dominated. The product was arguably better, but the AI models didn’t know that.

The gap was structural:

- No authoritative PR placements in English-language tech media

- No presence in Reddit threads where developers actually make hosting decisions

- No content written by practitioners solving real problems

- Documentation existed but wasn’t structured to be LLM-friendly

- Organic traffic was almost entirely branded — the brand existed, but had no topical authority

The visibility problem wasn’t bad SEO. It was that LLMs form recommendations from community consensus, structured citations, and authoritative content. Hetzner had none of those signals in place at scale.

What Hetzner Did — Specific Methodology

1) Community Content Engine

Hetzner launched community.hetzner.com, where external contributors submit practical tutorials that are reviewed in an open GitHub repo. Authors are paid up to €50 per accepted post.

- 375+ tutorials covering Kubernetes, LLM deployment, Terraform, CI/CD, security

- Written by practitioners solving real problems, with working code

- Each post is crawlable, linkable, and feeds directly into LLM training corpora

- The GitHub repo (hetzneronline/community-content) is indexed by LLMs as a primary source

Why it works: each tutorial earns its own backlinks, gets cited in forums, and gives LLMs a standalone answer to reference. One program, thousands of downstream signals.

2) AI-Specific Content Cluster

Hetzner built content that directly answers the queries LLMs receive most, and backed it with product.

- Tutorials: “Deploy a Private AI Chat with Ollama on a GPU Server”, “Hosting LLMs on Hetzner GEX44”

- Launched GPU servers for AI workloads in Sep 2024, which triggered organic coverage across developer platforms

- Partnership with SUPA.works for serverless LLM hosting added a second layer of AI-specific citations

- Direct result: Grok citations grew from 0 → 138 in the period following this content push

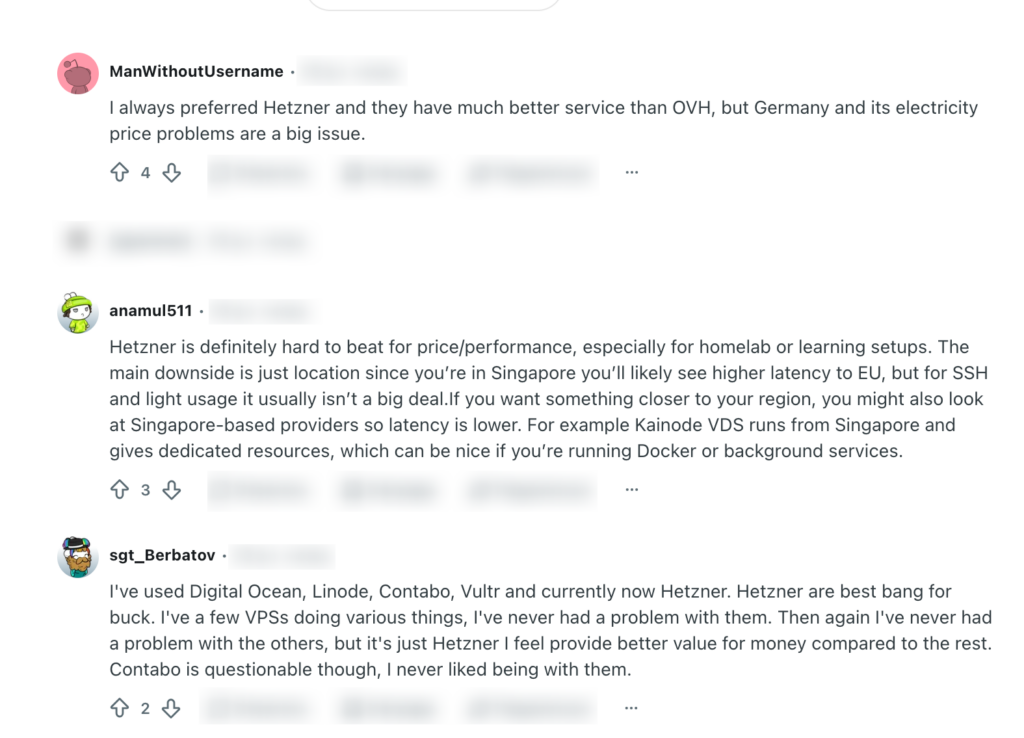

3) Reddit & Developer Forum Presence

Hetzner built a dominant organic presence in the communities where developers actually make hosting decisions — with no paid placements.

- Active in r/selfhosted, r/webdev, r/devops, r/homelab, r/sysadmin

- Experience-based tone, never promotional — passes Reddit’s “marketing smell” filter

- Thousands of threads where real users recommend Hetzner unprompted

Reddit is the second most-cited source in ChatGPT responses (1.8% of all citations), after Wikipedia. Organic developer consensus there cannot be replicated by any campaign.

Did it work? Definitely. Organic community consensus is the one signal that can’t be manufactured quickly — and it’s the signal LLMs weight most.

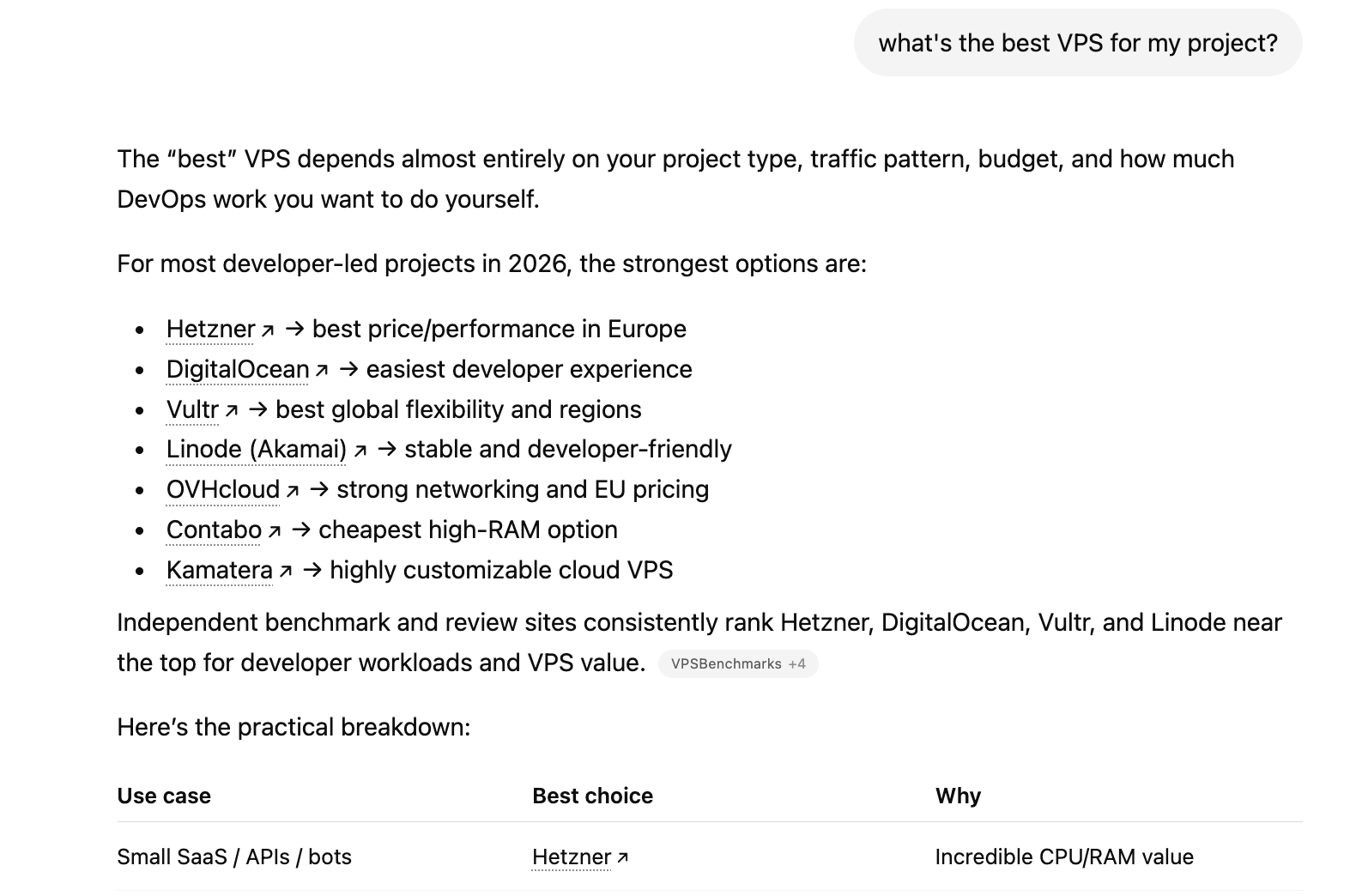

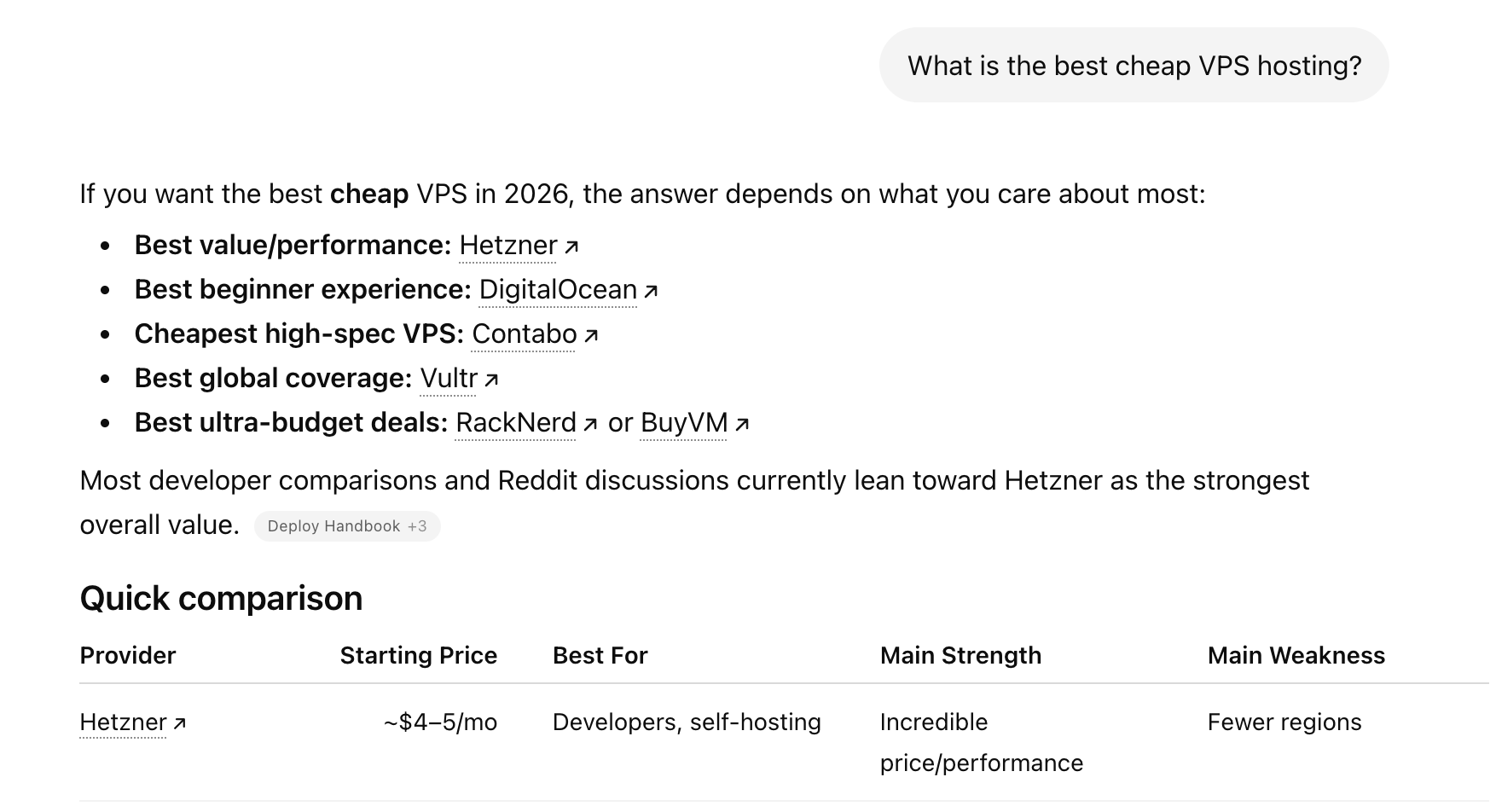

4) Listicle Ecosystem & AI-Optimized Comparison Content

Hetzner built dense presence in the exact content format LLMs pull from most when answering commercial queries — comparison articles and ranked lists.

- Appears in 50+ listicles across VPSBenchmarks, HostAdvice, dev.to, Kuberns, WebsitePlanet

- “2nd Best VPS 2024 under $15” — VPSBenchmarks

- “Best ChatGPT VPS Hosting Providers” — HostAdvice (2026)

- Competitors publish “Best Hetzner Alternatives” (DigitalOcean, Kuberns, Akamai) — each confirms Hetzner as the category benchmark and adds a referring domain

Listicles and comparison articles account for 21.9% of all LLM citations — the single most-cited content type across ChatGPT, Perplexity, and AI Mode. When developers ask an LLM “what’s the best VPS,” the model synthesizes from exactly these sources. Hetzner’s presence across dozens of them makes it the default answer.

The screenshots below show what that looks like in practice — two different ChatGPT queries, the same outcome:

5) Documentation and GitHub

Hetzner turned its technical infrastructure into a persistent LLM citation asset.

- docs.hetzner.com: question → answer → code example format across all products

- Updated in sync with releases, giving retrieval-augmented (RAG) models a freshness signal

- GitHub orgs hetzneronline and hetznercloud: official SDKs (hcloud-go, hcloud-python) and a Terraform provider with thousands of dependent projects

- Every developer using these tools potentially mentions Hetzner in their own repos, creating native brand mentions in LLM training corpora

6) PR and Award Signals

Domains listed on Trustpilot, G2, and Capterra are chosen by ChatGPT 3× more often as a trusted source. Hetzner runs consistent PR that compounds this signal.

- Gold at Server Provider Awards 2025 (May 2025)

- GPU server launch (Sep 2024) — wave of editorial coverage across tech media

- Singapore datacenter opening (Aug 2024)

- Object Storage launch (Dec 2024)

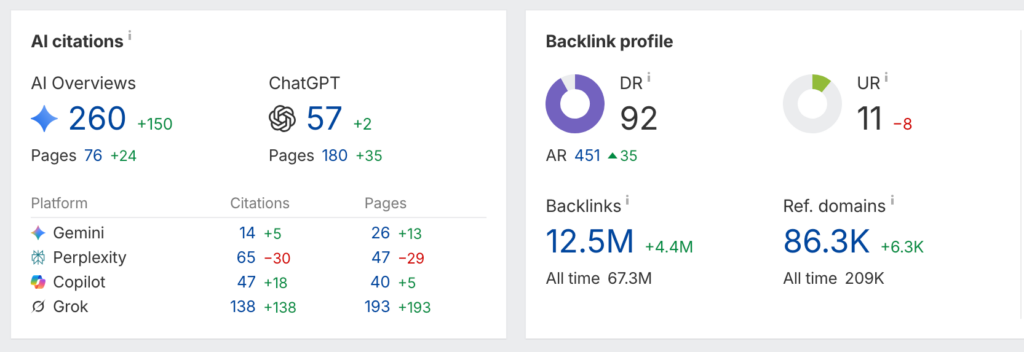

Referring Domains & AI Citations

These are the two metrics that actually explain LLM dominance — not organic click traffic.

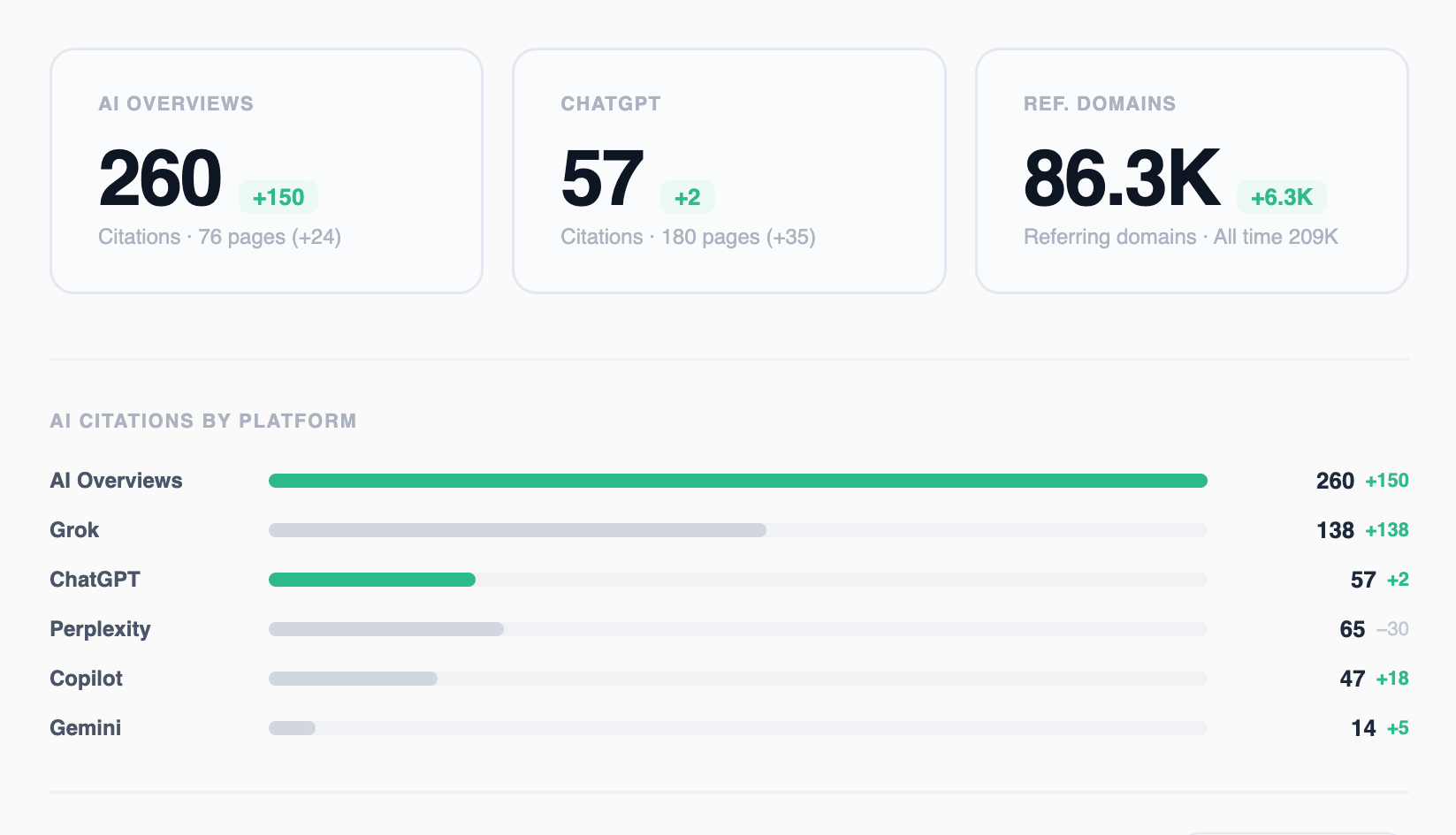

| Metric | Value | Change (6 months) |
|---|---|---|
| Domain Rating | 92 | not reported |
| Referring domains | 86,300 | +6,300 |
| Backlinks | 12.5M | +4.4M |
| AI Overviews citations | 260 | +150 |
| ChatGPT pages citing Hetzner | 57 | +2 |
| Grok pages citing Hetzner | 138 | +138 (new) |
| Copilot pages citing Hetzner | 47 | +18 |
| Perplexity pages citing Hetzner | 65 | not reported |

86,300 referring domains means 86,300 independent sites pointing to Hetzner: forums, tutorials, listicles, GitHub repos, and press. LLMs are trained on exactly this kind of distributed consensus.

The +150 AI Overview citations in six months are a direct, quantifiable measure of LLM visibility growth, not a proxy. The +138 Grok citations coincide with the GPU server launch and the AI content cluster of 2024–2025.
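The metrics table gives current values and absolute changes, but not the starting points, which is what you need to compare growth rates across metrics or against competitors. Below is a minimal Python sketch that derives them. It assumes every change figure covers the same six-month window referenced in the text; the names `METRICS` and `growth` are illustrative, not part of any tool.

```python
"""Derive implied baselines and relative growth from the metrics table.

Assumption: every change figure covers the same six-month window.
Backlinks are entered as integers (12.5M -> 12_500_000) to avoid float noise.
"""
from typing import Optional, Tuple

# (current value, change over the period), copied from the metrics table.
# Rows without a reported change (Domain Rating, Perplexity) are omitted.
METRICS = {
    "Referring domains": (86_300, 6_300),
    "Backlinks": (12_500_000, 4_400_000),
    "AI Overviews citations": (260, 150),
    "ChatGPT pages citing Hetzner": (57, 2),
    "Copilot pages citing Hetzner": (47, 18),
    "Grok pages citing Hetzner": (138, 138),
}


def growth(current: int, change: int) -> Tuple[int, Optional[float]]:
    """Return (baseline, relative growth), where baseline = current - change.

    Relative growth is None when the baseline is zero: the source is new,
    so a percentage increase is undefined.
    """
    baseline = current - change
    if baseline == 0:
        return baseline, None
    return baseline, change / baseline


if __name__ == "__main__":
    for name, (current, change) in METRICS.items():
        baseline, rate = growth(current, change)
        pct = "new (no baseline)" if rate is None else f"{rate:+.1%}"
        print(f"{name:<30} {baseline:>12,} -> {current:>12,}  {pct}")
```

Under that assumption, AI Overviews citations rose from an implied 110 to 260 (about +136%), while referring domains grew from about 80,000 to 86,300 (about +7.9%). Grok is reported as new rather than as a percentage, because growth from zero has no meaningful rate.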

What Founders Can Learn From This Case

LLMs don’t rank pages — they synthesize consensus. And consensus is built outside your website: in community forums, comparison articles, developer tutorials, and press. Hetzner had all of it, built over decades. The brands that established this kind of distributed authority before the LLM era now hold a structural advantage that can’t be closed quickly.

Two patterns that apply to any brand:

1) Distributed authority beats concentrated authority. Hundreds of independent sources across different communities saying the same thing outweigh any single high-value placement. LLMs reward breadth of consensus.

2) AI citations are now a measurable KPI. The +150 AI Overview citations and +138 Grok citations in six months are trackable, comparable to competitors, and directly tied to specific content actions.

The window to build this organically is still open, but it is closing as more brands deliberately build what Hetzner built by accident.