LLM visibility measures how often, how accurately, and how favorably AI assistants describe your brand in generated responses — across platforms like ChatGPT, Perplexity, Gemini, and Google AI Overviews. It is distinct from Google SEO: a brand can rank #1 in organic search and be entirely absent from the AI answers shaping buyer consideration before any website is visited. This article explains why that happens, how LLMs select sources, and what marketers can do about it — organized by root cause, tactical layer, and measurable outcome.

Here’s a scenario that’s playing out in marketing teams right now: a brand ranks #1 on Google for its core category keyword. Domain authority of 70. Three hundred pieces of content published in the last two years. The CMO is happy. Then someone runs a test — they open ChatGPT and ask it to recommend solutions in that category. The brand isn’t there. A competitor with a fraction of the traffic shows up first, described warmly and specifically. The brand that built its entire digital presence around Google is invisible in the conversation that’s increasingly shaping what buyers believe before they ever visit a website.

This isn’t an SEO failure. It’s a different problem — and it requires a different model for thinking about visibility entirely.

AI assistants generated 527% more referred sessions year-over-year in the first five months of 2025. AI-referred traffic to retail sites grew 4,700% year-over-year by July 2025. Forty-two percent of B2B decision-makers now use an LLM in the first step of their buying process. When those buyers ask ChatGPT or Perplexity to help them build a shortlist, the brands that appear in the answer enter the consideration set before a single website is visited. The brands that don’t appear don’t exist in that buyer’s world yet — and they may never catch up.

Understanding why brands disappear from AI answers, and what actually drives their reappearance, is the most important visibility problem in marketing right now.

What Is LLM Visibility (And Why It’s Not the Same as SEO Rankings)

LLM visibility is a measure of how AI assistants describe and position your brand in their responses — not just whether you appear, but how often, in what context, with what sentiment, and alongside which competitors.

Generative Engine Optimization (GEO) is the practice of improving a brand’s LLM visibility — structuring content, building entity authority, and distributing brand presence across the web so that AI systems cite and recommend the brand accurately and consistently.

That definition sounds similar to SEO at first. It isn’t. Traditional search engines rank pages; AI systems assemble answers. Google returns a list of results for a query and lets the user evaluate them. An LLM generates a synthesized response, drawing from multiple sources, and presents a conclusion. The user gets an answer, not a list of options to choose from.

The consequence of this distinction is stark: where Google returns ten results on page one, AI answers typically cite two to seven sources. You’re either in the answer or you’re invisible — there is no page two in an AI response. Most brands, by default, are invisible.

The three moments where LLM visibility determines outcomes are category definitions (“what are the best tools for X?”), comparison queries (“how does [Brand A] compare to [Brand B]?”), and recommendation lists (“what should I use for Y?”). In all three, if your brand isn’t cited, the AI has effectively removed you from the buyer’s consideration set — before they’ve seen a single search result.

This is why traditional traffic metrics and keyword rankings no longer tell the full story. A brand can maintain its Google rankings, hold steady on organic traffic, and watch 80% of the relevant AI conversations in its category happen without it.

| Dimension | Traditional SEO | LLM Visibility |
|---|---|---|
| Goal | Rank a page in search results | Be cited in an AI-generated answer |
| Output | A ranked list of URLs | A synthesized answer with 2–7 sources |
| Primary ranking signal | Backlinks + keyword relevance | Brand entity strength + content extractability |
| Measurement | Keyword rank, organic traffic | Mention frequency, citation share of voice |
| Content format | Keyword-optimized pages | Structured, fact-dense, front-loaded content |
| Competition | Top 10 rankings | 2–7 citations per answer |

Why Your Brand Disappears — The 5 Root Causes

Most diagnostics for AI invisibility treat it as a single problem with a single fix. It isn’t. Brands disappear from AI answers for five distinct reasons — and applying the wrong fix wastes months.

Cause 1 — The Reinforcement Gap

AI systems learn which brands belong in a category by observing repeated patterns across many independent sources. If a brand rarely appears in third-party category discussions — guides, comparisons, reviews, forum threads — the model has weak evidence connecting that brand to the category, even if the brand’s own website is authoritative and comprehensive. The model simply doesn’t have enough signal to confidently place it.

This is why new entrants and challenger brands face a structurally harder problem than established players: their absence from training-era discussions isn’t fixable by publishing more content on their own site. The fix requires building web-wide brand presence across third-party sources over time.

Cause 2 — The Third-Party Signal Imbalance

An AirOps analysis of over 45,000 citations found that 85% of AI brand mentions originate from third-party content, not owned channels. Omniscient Digital’s analysis of 23,000+ branded LLM citations found that earned media (third-party coverage) accounts for 48% of citations, while owned brand content accounts for just 23%.

Brands that invest 90% of their content budget in owned channels are inverting the ratio that AI systems actually respond to. By these figures, content on your own domain earns roughly half as many citations as earned media. It still matters, but as a supporting signal rather than the primary one.

Cause 3 — The Infrastructure Problem

LLM crawlers — GPTBot, ClaudeBot, PerplexityBot — do not render JavaScript. Brands running JavaScript-heavy frontends may be serving AI crawlers a blank shell. The content is there for human visitors; it doesn’t exist for AI bots. This is a surprisingly common failure mode that operates entirely beneath the surface of marketing analytics.
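A quick way to see what a non-rendering crawler receives is to fetch the raw HTML without executing JavaScript and check whether your key claims are actually in it. The sketch below is a minimal, standard-library-only Python check built on that assumption. `visible_text`, `missing_phrases`, and `fetch_raw_html` are hypothetical helper names, and the User-Agent string is a placeholder, not any real AI crawler’s header.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class VisibleTextParser(HTMLParser):
    """Collects text from raw HTML roughly as a non-rendering crawler sees it.

    Script and style contents are skipped because they are code, not page copy.
    """

    SKIP_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def missing_phrases(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do NOT appear in the unrendered HTML text."""
    text = visible_text(raw_html).lower()
    return [p for p in key_phrases if p.lower() not in text]


def fetch_raw_html(url: str) -> str:
    # Plain HTTP fetch: no JavaScript runs, similar to a non-rendering bot.
    # The User-Agent value is a placeholder assumption.
    req = Request(url, headers={"User-Agent": "visibility-audit-sketch/0.1"})
    with urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

On a client-rendered page whose headline is injected by a script, `missing_phrases` reports the headline as missing; on a server-rendered page it returns an empty list.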

Separately, Cloudflare changed its default settings to block AI crawlers, meaning brands using Cloudflare without reviewing their configuration may have inadvertently locked AI systems out of their content entirely — with no corresponding change in their analytics.

Cause 4 — Fragmented Brand Narrative

AI systems avoid recommending what they cannot compress into a clear, consistent answer. When a brand’s positioning, messaging, and tone differ meaningfully across its website, press coverage, and third-party mentions, the model encounters conflicting signals and responds with uncertainty — which manifests as exclusion.

This is sometimes called the “AI avoids uncertainty” effect. A brand that has repositioned, merged with another company, expanded into adjacent markets, or simply evolved its messaging without updating its broader web presence will often find that AI systems either omit it or describe it inaccurately. Consistency across owned and third-party sources is a citation prerequisite.

Cause 5 — Blocking AI Crawlers Unintentionally

Beyond Cloudflare, brands can inadvertently block AI bots through robots.txt configurations, aggressive bot-detection systems, or IP-range blocking that sweeps in legitimate AI crawlers alongside malicious bots. Many brands have never audited their robots.txt for entries that block GPTBot, PerplexityBot, or Google-Extended — and have been invisible to AI systems for months as a result.

How LLMs Actually Decide What to Cite

Every competitor article explains that brands get excluded from AI answers. Almost none explains how the selection actually works. Without understanding the mechanism, any optimization tactic is guesswork.

LLMs use two fundamentally different pathways to answer a query, and they require different strategies.

Pathway 1: Parametric Memory

This is knowledge baked into the model during training. Roughly 60% of ChatGPT queries are answered purely from parametric knowledge, without triggering a web search at all. Brands and entities that were mentioned frequently across authoritative sources prior to the model’s training cutoff have strong neural representations — they’re “instinctive” knowledge for the AI, surfaced automatically without any retrieval step.

The strategic implication is uncomfortable: if a brand wasn’t frequently discussed across authoritative sources before the last training cycle, no amount of current content production will fix its parametric absence quickly. Building parametric memory is a long-game investment in distributed brand presence across third-party sources — investments made today are building the signals for the next model’s training data, not just current retrieval.

Pathway 2: Retrieval-Augmented Generation (RAG)

For queries requiring current information, or when the model’s confidence in its parametric knowledge is low, a RAG-powered LLM generates sub-queries, retrieves live web documents, cross-references facts across sources, evaluates credibility, and synthesizes a response citing 2–7 sources. The “credibility” evaluation isn’t based primarily on domain authority — it’s based on corroboration across multiple trusted sources, entity clarity (how clearly and consistently the brand is described), content extractability (can the AI pull a clear answer from this page?), and recency.

This is why 80% of LLM citations don’t rank in Google’s top 100 for the same query (Ahrefs, August 2025). A page doesn’t need to rank highly to be cited — it needs to be structured so that an AI system can extract a clear, trustworthy answer from it efficiently.
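To make the credibility evaluation concrete, here is a deliberately simplified toy model in Python. It is not how ChatGPT, Perplexity, or any other platform actually scores sources. The `Candidate` fields mirror the factors named above, and every weight is a hypothetical number chosen only to show how a highly authoritative but hard-to-extract page can lose to a smaller, clearer one.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    corroboration: float     # 0-1: how well other retrieved sources agree with it
    entity_clarity: float    # 0-1: how clearly and consistently the brand is described
    extractability: float    # 0-1: how easily a clear answer can be pulled out
    recency: float           # 0-1: how fresh the content is
    domain_authority: float  # 0-1: included, but deliberately given a small weight


# Hypothetical weights for illustration only; no platform publishes these.
WEIGHTS = {
    "corroboration": 0.35,
    "entity_clarity": 0.20,
    "extractability": 0.25,
    "recency": 0.10,
    "domain_authority": 0.10,
}


def score(c: Candidate) -> float:
    """Weighted sum of the candidate's factor values."""
    return sum(weight * getattr(c, name) for name, weight in WEIGHTS.items())


def select_citations(candidates: list[Candidate], k: int = 3) -> list[Candidate]:
    """Keep the k highest-scoring candidates, mimicking a short citation list."""
    return sorted(candidates, key=score, reverse=True)[:k]
```

With these toy weights, a high-authority page with weak corroboration and poor extractability scores about 0.38, while a lower-authority page that is clear and well corroborated scores about 0.79 and takes the single citation slot.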

The Non-Determinism Problem

LLMs are probabilistic engines. Ask the same question five times and you can get five different answers, with different cited sources, brand mentions, and recommendations. Only 30% of brands maintain visibility from one AI answer to the next (AirOps). SparkToro (January 2026) found that when ChatGPT or Google’s AI was asked the same question 100 times, there was less than a 1-in-100 chance that any two responses would contain the same list of brands.

LLM visibility is a frequency metric, not a ranking position. The goal isn’t to appear once — it’s to appear consistently enough to influence buyers across multiple interactions throughout their research process.

Platform Divergence

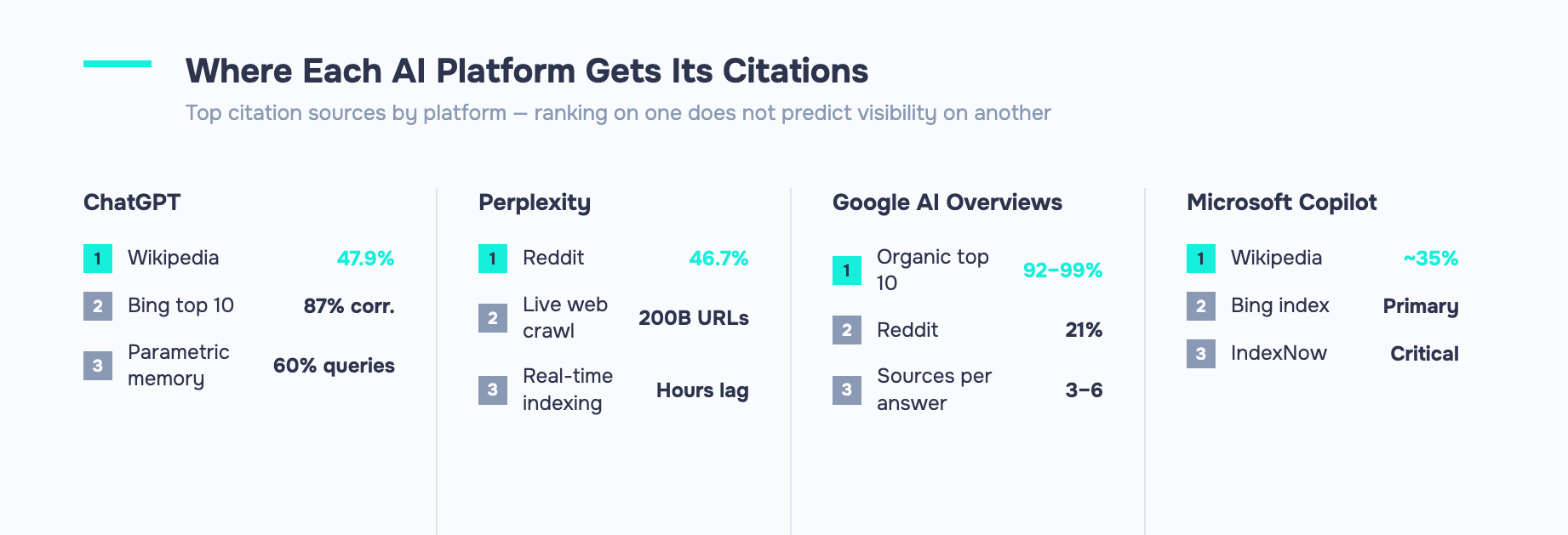

Different AI platforms use different source hierarchies, and only 11% of domains are cited by both ChatGPT and Perplexity (Digital Bloom, 2025). ChatGPT’s web citations correlate 87% with Bing’s top-10 results; Perplexity draws 46.7% of its citations from Reddit. Google AI Overviews cite pages from organic top-10 domains in 92–99% of responses, but select only the most extractable 3–6 from that pool.

A brand that tests only ChatGPT is missing the majority of the AI visibility landscape — and may be drawing entirely wrong conclusions about its overall presence.
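Checking this divergence in your own category is simple set arithmetic once you have collected the URLs each platform cited for the same prompts. The sketch below assumes you already have those URL lists (collecting them is out of scope) and reads “cited by both” as the share of all distinct cited domains that appear on both platforms; the published statistic may define it differently. `domains` and `overlap_share` are hypothetical helper names.

```python
from urllib.parse import urlparse


def domains(urls: list[str]) -> set[str]:
    """Normalize cited URLs to bare hostnames (lowercased, leading 'www.' removed)."""
    out = set()
    for url in urls:
        host = urlparse(url).hostname  # urllib already lowercases the hostname
        if host:
            out.add(host.removeprefix("www."))
    return out


def overlap_share(a: set[str], b: set[str]) -> float:
    """Share of all distinct cited domains that both platforms cite."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

If ChatGPT cites three domains, Perplexity cites three, and they share only one, the overlap is 1 of 5 distinct domains, or 20%.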

LLM Visibility vs. Traditional SEO — What Still Works and What Doesn’t

The short answer to “Is SEO still relevant for LLM visibility?” is yes, but it is now necessary rather than sufficient, and no longer the primary lever.

Several traditional SEO signals transfer meaningfully to LLM visibility. Strong E-E-A-T signals (genuine expertise, real authors, authoritative sourcing) contribute to the kind of entity clarity that LLMs trust. Technical accessibility (fast page loads, clean HTML, proper crawlability) remains important because AI bots won’t index content they can’t parse. Backlinks matter, but primarily as a proxy for inclusion in Common Crawl, the large public web archive that feeds the training corpora of most major AI models. Because Common Crawl tends to prioritize well-linked pages, a well-linked site is more likely to be captured there and therefore more likely to have parametric representation.

What changes is the goal and the primary optimization target. The shift is from ranking a page to being cited in an answer. Keyword density is irrelevant — LLMs interpret meaning and context, not keyword frequency. Single-page authority matters far less than cross-platform brand reinforcement. The correlation between top Google rankings and AI-cited sources has dropped from roughly 70% to below 20% according to Brandlight’s research — strong SEO is now a weak predictor of AI visibility.

On terminology: GEO (Generative Engine Optimization), AEO (Answer Engine Optimization), LLMO, and LLM SEO all describe the same strategic goal from slightly different angles. The acronym soup doesn’t represent meaningful disciplinary differences — pick a term and don’t let the vocabulary debate distract from the actual work.

The practical framing: existing SEO budget and infrastructure is a foundation, not a solution. GEO-specific activities — entity reinforcement, earned media, content restructuring for AI extractability, review platform presence — require additional investment, roughly 20–25% incremental budget allocation according to Evergreen Media research, layered on top of sound baseline SEO.

How to Improve Your LLM Visibility — A Tactical Playbook

Improving LLM visibility is a layered problem, and the right tactics depend on which failure mode a brand has. Organized by effort and time-to-impact, the playbook breaks into four layers.

Layer 1: Technical Foundation (Days to Weeks)

Fix the infrastructure problems first — they’re the only failure mode where doing nothing literally means zero AI visibility.

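Audit your robots.txt immediately. Search for any rules disallowing GPTBot, ClaudeBot, PerplexityBot, or Google-Extended. If any of these are blocked, the platforms behind them cannot use your content; remove the blocks. (Google-Extended is a usage-control token rather than a separate crawler: it governs whether Google may use your content for Gemini, and Google states it does not affect inclusion in Google Search.) If you’re using Cloudflare, review your bot-management settings, including Bot Fight Mode and the AI-crawler blocking options, since the defaults may be blocking AI crawlers.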
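The robots.txt check can be scripted with Python’s standard `urllib.robotparser` module. Treat this as a first-pass sketch: the module applies the original robots.txt rules with simple path-prefix matching, so wildcard patterns that some crawlers honor may be evaluated differently. The agent list is just the four tokens named in this step, and `blocked_ai_agents` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# The four user-agent tokens named in this step; extend as needed.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_agents(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI agents that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]
```

To audit a live site, fetch its robots.txt text (for example with `urllib.request.urlopen`) and pass it in along with a representative page URL. A file containing `User-agent: GPTBot` followed by `Disallow: /` returns `["GPTBot"]`.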

For JavaScript-heavy frontends, implement server-side rendering (SSR) or pre-rendering to ensure AI crawlers receive HTML content rather than a blank shell. This is a one-time infrastructure change with permanent benefits.

Validate your schema markup. FAQ, HowTo, Organization, and Article schemas help AI systems understand and extract content from your pages. If your most important pages lack structured data, add it.
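As an illustration, the sketch below builds Organization and FAQPage JSON-LD with Python’s `json` module, using schema.org types and properties (`sameAs`, `mainEntity`, `acceptedAnswer`). The brand name and URLs are hypothetical placeholders, and the helper names are illustrative; validate real markup with a structured-data testing tool before shipping it.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """schema.org Organization; sameAs links the entity to its third-party profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    # Escape "</" so text inside the JSON cannot prematurely close the script tag.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"
```

The resulting tag goes in the page’s server-rendered HTML, so crawlers that do not run JavaScript still receive it.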

Finally, check your Bing indexing health through Bing Webmaster Tools. Because ChatGPT’s web citations correlate 87% with Bing’s top-10 results (versus 56% for Google), poor Bing indexing can directly suppress ChatGPT citation rates in ways that never show up in Google Search Console.

Layer 2: Content Structure for Extractability (Weeks to Months)

LLMs cite the content they can use, not necessarily the content that’s most comprehensive. Forty-four percent of all LLM citations come from the first 30% of a page’s text (Ahrefs, December 2025). If your key claims, original data points, and brand-outcome statements are buried in the third or fourth section of a 2,500-word article, you’re structurally disadvantaged regardless of quality.
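A rough way to check front-loading is to measure where each key claim first appears as a fraction of the page’s text. This sketch uses character offsets as a stand-in for “the first 30% of a page’s text” and exact, case-insensitive substring matching; both are simplifying assumptions, and `claim_position` and `buried_claims` are hypothetical helpers.

```python
from typing import Optional


def claim_position(text: str, claim: str) -> Optional[float]:
    """Fraction of the way through text where claim first appears, or None if absent."""
    idx = text.lower().find(claim.lower())
    return None if idx == -1 else idx / max(len(text), 1)


def buried_claims(text: str, claims: list[str], cutoff: float = 0.30) -> list[str]:
    """Claims that are missing or first appear after the cutoff fraction of the text."""
    flagged = []
    for claim in claims:
        pos = claim_position(text, claim)
        if pos is None or pos > cutoff:
            flagged.append(claim)
    return flagged
```

Running it over the plain text of a draft (for example, the output of an HTML-to-text step) gives a short list of claims to move up or add.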

Front-load your content. Move key claims, statistics, and conclusions to the first 200–300 words. Structure each page around a clear primary question and answer it in the opening paragraphs. Use direct Q&A sections visible in HTML — not hidden behind JavaScript accordions that AI crawlers can’t access.

Add fact density. Princeton GEO research found that adding statistics to content increases AI visibility by 22%; adding quotations boosts it by 37%. Structured content with clear headings and FAQ sections is 28–40% more likely to be cited than unstructured prose.

Integrate explicit brand language throughout your content body. One of the most common AI visibility failures is the “ghost citation” — where AI cites a brand’s content as a source while recommending a competitor in the same response. A risk and compliance software brand analyzed in Seer Interactive’s February 2026 study (541,213 LLM responses) had its content cited over 100 times in 25 days with zero brand mentions across all those citations. The fix: write content so that AI cannot extract the insight without the brand name attached. Embed brand-outcome statements — “Company X’s research found…” — throughout the piece, not just in headers or bylines.
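One way to spot ghost-citation risk before publishing is to flag sentences that carry a concrete, extractable claim but never name the brand. The heuristic below treats any sentence containing a digit as a claim and splits sentences on terminal punctuation; both are crude assumptions, so read the output as a review list rather than a verdict. `unbranded_claims` is a hypothetical helper name.

```python
import re


def unbranded_claims(text: str, brand: str) -> list[str]:
    """Sentences that contain a number (a proxy for a citable claim) but not the brand name."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        s for s in sentences
        if re.search(r"\d", s) and brand.lower() not in s.lower()
    ]
```

For a draft that says “Teams lose 11 hours a week to manual evidence collection,” the sentence is flagged; rewriting it as “Acme’s analysis found that teams lose 11 hours a week…” attaches the brand to the insight.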

Layer 3: Brand Entity Building (Months — High Impact)

This is the highest-ROI long-term investment and the most neglected by brands that built their presence around Google SEO.

Get your brand onto review platforms. SE Ranking research found that domains with profiles on Trustpilot, G2, Capterra, Sitejabber, and Yelp have 3x higher citation rates from ChatGPT compared to sites without such presence. These platforms carry considerable weight with AI systems because they represent authentic third-party validation. If you lack profiles on the major review platforms for your vertical, this is one of the fastest structural improvements available.

Build Reddit and Quora community presence — authentically, not promotionally. Domains with substantial branded mentions on these platforms show roughly 4x higher citation chances. Participate in category discussions where you have genuine expertise. Answer questions. Engage with community members. The AI systems that prioritize these platforms (particularly Perplexity, where Reddit accounts for 46.7% of citations) will reflect that presence.

Invest in earned media and digital PR. Third-party mentions in credible publications build both parametric memory (for future training cycles) and real-time retrieval authority (for RAG-powered platforms). Unlinked brand mentions in authoritative publications count — AI systems recognize entity mentions even without hyperlinks.

Create YouTube content. Ahrefs research identified YouTube mentions as a top citation correlator for both ChatGPT and Google AI Overviews. If you have minimal video presence, this is an underexploited high-value channel for AI visibility.

Where warranted, pursue Wikipedia presence. Wikipedia is a standard component of the training data behind major AI models and accounts for 47.9% of ChatGPT citations. A well-maintained, notable Wikipedia page is one of the most powerful parametric memory anchors available, but it must meet Wikipedia’s notability standards and be maintained for accuracy.

Layer 4: Ongoing Monitoring and Reinforcement (Continuous)

Citation decay — the phenomenon where a brand stops appearing in AI answers without any change to its content — is primarily competitive, not technical. When competitors publish more reinforcing content, they shift the citation probability calculation in their favor even if the original brand’s pages are unchanged. A brand that goes quiet for 60 days while competitors produce reinforcing content can find its AI presence significantly eroded.

Maintain a rolling content calendar targeting the queries where you have commercial value. When a competitor publishes new content targeting your citation-strong queries, respond with fresh content or updates within 30 days. Treat AI visibility like share of voice in a media category — it’s a continuous competition, not a one-time optimization project.

How to Measure LLM Visibility — Metrics, Tools, and a Simple Starting Audit

Measuring LLM visibility starts with accepting one counterintuitive truth: a single-prompt check is almost meaningless. Due to the probabilistic nature of LLMs, a brand that doesn’t appear in three queries on a Tuesday might appear in six queries on Friday with no changes to content or rankings. Meaningful measurement requires multi-sampling — running the same prompts 3–5 times each to establish a reliable baseline.
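The arithmetic behind multi-sampling is worth seeing. If a brand’s true chance of being mentioned in a response to a given prompt is p, and runs are treated as independent (a simplifying assumption), the chance it is missed in every one of n runs is (1 - p)^n. A minimal Python sketch, with hypothetical helper names:

```python
import math


def miss_probability(p: float, runs: int) -> float:
    """Chance a brand with per-response mention rate p is absent from all runs."""
    return (1 - p) ** runs


def runs_needed(p: float, max_miss: float = 0.05) -> int:
    """Fewest runs that push the all-runs-missed chance to max_miss or lower."""
    if not 0 < p < 1:
        raise ValueError("p must be strictly between 0 and 1")
    return math.ceil(math.log(max_miss) / math.log(1 - p))
```

For a brand mentioned in 40% of responses, a single check misses it 60% of the time, three runs still miss it 21.6% of the time, five runs about 7.8%, and six runs are needed to push the miss rate below 5%.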

Five metrics to track:

- Mention frequency — how often your brand appears across a consistent set of category-level prompts, averaged across multiple runs

- AI share of voice — your brand mentions as a percentage of total brand mentions across category-relevant responses

- Response position — where in the answer your brand appears (first, middle, or last); first mentions carry more influence

- Sentiment and framing — whether the AI describes you accurately, positively, and with the attributes you want associated with your brand

- Citation sources — which third-party URLs the AI draws from when mentioning you, and whether those sources are accurate and favorable
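The first three metrics can be computed directly from logged responses. This sketch assumes each response has already been reduced to an ordered list of the distinct brands it mentions (that extraction step is not shown); sentiment and citation sources need separate review. `visibility_metrics` is a hypothetical helper name.

```python
from statistics import mean
from typing import Optional


def visibility_metrics(responses: list[list[str]], brand: str) -> dict[str, Optional[float]]:
    """Mention frequency, share of voice, and average 1-based position for one brand.

    Each response is an ordered list of distinct brand names, in order of mention.
    """
    appearing = [r for r in responses if brand in r]
    total_mentions = sum(len(r) for r in responses)
    return {
        # share of sampled responses that mention the brand at all
        "mention_frequency": len(appearing) / len(responses) if responses else 0.0,
        # the brand's mentions as a share of all brand mentions
        "share_of_voice": len(appearing) / total_mentions if total_mentions else 0.0,
        # average position among brands, counting only responses where it appears
        "avg_position": mean(r.index(brand) + 1 for r in appearing) if appearing else None,
    }
```

Across four sampled responses in which the brand appears twice (once first, once second) among eight total brand mentions, this gives a mention frequency of 0.5, a share of voice of 0.25, and an average position of 1.5.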

The 30-minute manual audit:

Open ChatGPT, Gemini, Perplexity, and Claude in separate incognito tabs. Run 10–15 category-level prompts, such as “best [category] tools for [use case],” “compare [your brand] to [competitor],” and “what should I use for [problem your brand solves]?” Run each prompt at least twice. Document every brand that appears in each response, then score your presence: in how many of the total responses did you appear? Where in the answer? Were you described favorably? Which competitors consistently appeared where you were absent?

This exercise takes 30 minutes. It’s the most valuable competitive intelligence exercise available to a marketing team right now, and fewer than a quarter of companies are doing it systematically.
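Once the audit results are logged, the last audit question (which competitors appear where you are absent) is a short aggregation. The data shape here, a mapping from each prompt to the brand lists from its runs, is an assumption about how you record results, and `competitor_gaps` is a hypothetical helper name.

```python
from collections import Counter


def competitor_gaps(results: dict[str, list[list[str]]], brand: str) -> dict[str, int]:
    """Count, per competitor, the prompts where it appeared but brand never did."""
    gaps: Counter = Counter()
    for runs in results.values():
        if any(brand in run for run in runs):
            continue  # the brand showed up at least once for this prompt
        competitors = {name for run in runs for name in run if name != brand}
        gaps.update(competitors)
    # Most frequent gap competitors first.
    return dict(gaps.most_common())
```

The competitors at the top of this list, and the prompts behind their counts, are natural starting points for the quarterly competitor citation mapping.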

Tool landscape overview:

- Semrush AI Visibility Toolkit — best for teams already operating in the Semrush ecosystem; integrates with existing keyword and competitive tracking

- LLMrefs — keyword-based, affordable, statistically rigorous; good for teams starting their AI visibility measurement practice

- Profound — enterprise-grade analytics with deep citation attribution; best for organizations needing boardroom-ready reporting

- LLM Pulse — multi-platform tracking with clean interface; well-suited for mid-market teams running regular prompt audits

- Ahrefs Brand Radar — emerging AI visibility tracking integrated into a tool most SEO teams already own

What “good” looks like: top-performing brands capture 15% or more share of voice across core query sets. Enterprise leaders reach 25–30% in specialized verticals. Citation sources changing 40–60% month-over-month is normal — track trends across consistent prompt libraries, not point-in-time snapshots.

Monthly re-audits using the same prompt set allow meaningful trend analysis. Quarterly deep audits should include competitor citation mapping: who is appearing where you’re absent, on which queries, and with what content?

The Agentic AI Horizon — Why LLM Visibility Will Only Get More Important

The current state of AI-assisted discovery has a human in the loop. A buyer asks ChatGPT which CRM to consider; ChatGPT returns a list; the buyer evaluates it, visits websites, reads reviews, and makes a decision. LLM visibility determines which brands enter that consideration set, and being in or out of it is already a significant competitive factor.

The near future removes the human from several of those steps. Agentic AI systems — already emerging in travel booking, procurement, and software evaluation — research, compare, and in some cases directly select or purchase on behalf of users. When AI becomes the decision-maker rather than the advisor, being cited stops being the goal. Being selected is.

A16z documented this shift through the example of Canada Goose tracking whether AI models would mention the brand unprompted — not just in response to direct queries, but as a spontaneous association with winter outerwear. In an agentic world, that kind of default brand association — where the AI reaches for your brand name before a user has even specified it — is the competitive moat.

“LLM visibility stops being about getting cited and starts being about being selected.”

The compounding dynamic matters here. Every citation builds authority; every authority mention increases future citation probability. Brands moving aggressively now are establishing default status in AI parametric memory before the agentic era arrives. The citation moat compounds over time in a way that makes early investment disproportionately valuable — and late entry exponentially harder.

The brands building LLM visibility infrastructure in 2025–2026 are not optimizing for today’s chatbot. They’re positioning for an AI-driven discovery landscape that will look significantly different within two years — one where their brand is already the default answer in the model’s memory before a single user prompt is typed.

From Invisible to Inevitable — Your Next Steps in AI Search

The brands winning in AI search right now share three structural characteristics: entity clarity (they’re described consistently and accurately across the web), content extractability (their pages are structured for AI citation, not just human reading), and multi-platform presence (they don’t rely on a single platform or channel to maintain their AI visibility).

None of those characteristics develop quickly — which is why the time advantage of moving now is real. AI citation authority compounds. A brand that builds strong parametric memory and citation presence today will be increasingly difficult to displace as competitors wake up to the same need 12–18 months from now.

The starting point isn’t a complete overhaul. It’s the 30-minute audit described above. Open four AI platforms in incognito tabs. Run 15 prompts. Document the results honestly. What you find will tell you which of the five root causes is limiting your visibility — and which layer of the tactical playbook to address first.

Improving LLM visibility at scale — covering technical crawlability, content optimization, entity reinforcement, and multi-platform measurement — is precisely what a specialized AI SEO service is built to handle systematically. But the audit itself costs nothing and changes how you think about brand visibility for good.

The AI already has opinions about your brand. The question is whether you’ve given it enough reason to share them.
