A B2B buyer is using ChatGPT to research solutions in your category right now. They’ve typed something like “what’s the best project management tool for a 200-person SaaS company scaling into enterprise?” — and ChatGPT handed them a synthesized verdict with three vendor names. Yours wasn’t one of them.

Not because your product is inferior. Not because your content is bad. Because your content wasn’t built for AI retrieval.

What follows is a practical GEO playbook for closing that visibility gap — seven sections covering why this matters for your pipeline, how ChatGPT retrieves content, which prompts to target, how to structure content for citation, how to build off-site authority, what technical setup you’re probably missing, and how to measure whether any of it is working.

TL;DR — Seven Actions That Drive ChatGPT Visibility for B2B

- Understand how ChatGPT’s two retrieval modes work — and optimize for both in parallel.

- Map the specific prompts your ICP asks ChatGPT before writing a single piece of content.

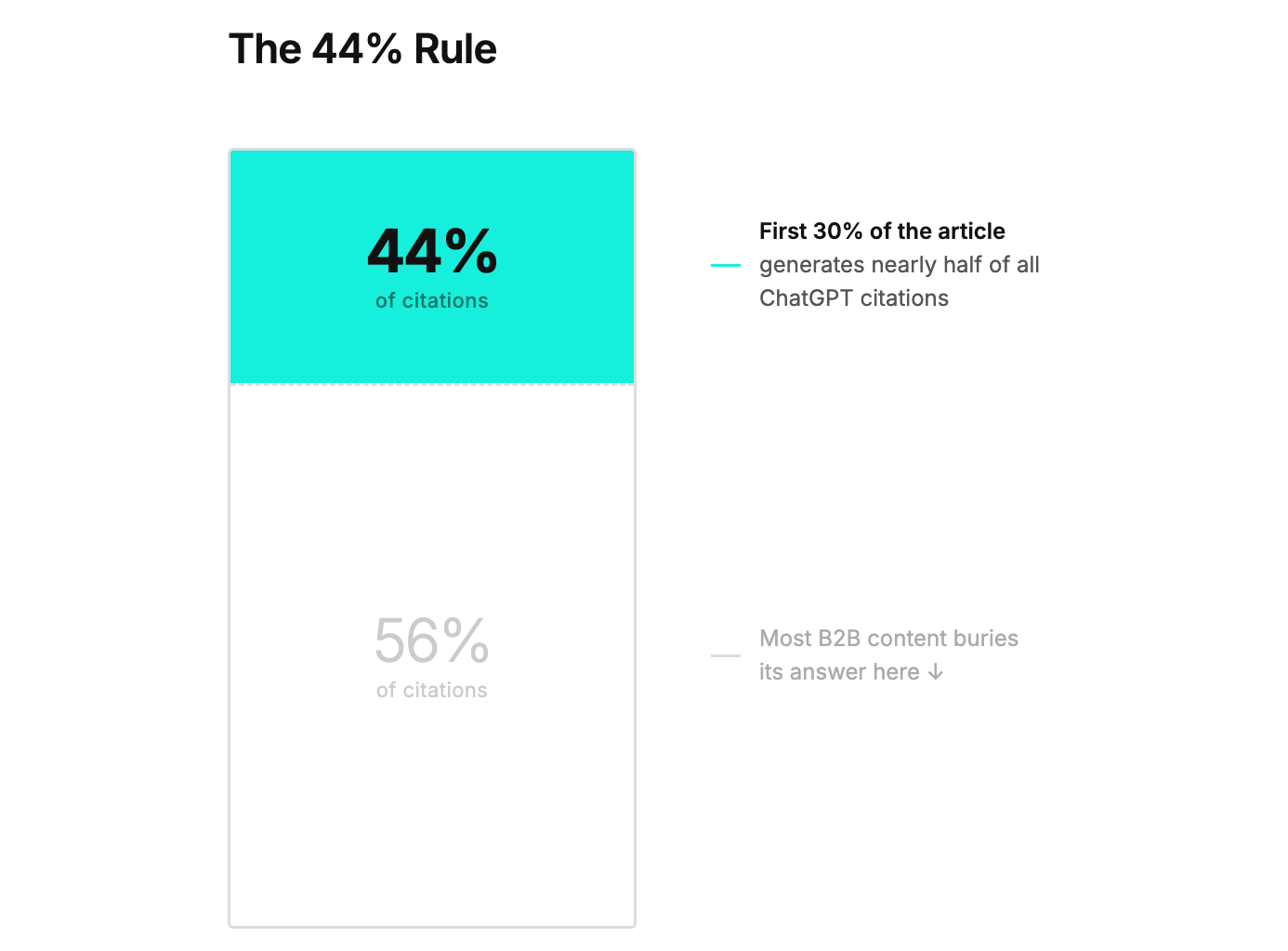

- Front-load every article and section with a direct 40–60 word answer; the first 30% of content generates 44% of citations.

- Implement Article, FAQPage, and HowTo schema markup; 61% of ChatGPT-cited pages use structured data.

- Build and maintain active profiles on G2, Capterra, Reddit, and Quora — off-site signals drive the citation floor.

- Verify your site in Bing Webmaster Tools, submit sitemaps, and audit your robots.txt for GPTBot blocks.

- Track AI Share of Voice monthly using 20–30 ICP prompts; without measurement, optimization is directionless.

What Is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the practice of structuring and optimizing content so that AI-powered platforms — ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude — surface it as a cited source when generating responses to user queries.

Where traditional SEO optimizes for ranking positions in a list of blue links, GEO optimizes for citation within a synthesized answer. The difference matters: a page can rank #1 on Google and never appear in a ChatGPT response. Only 12% of URLs cited by ChatGPT rank in Google’s top 10 for the original query (Ahrefs, August 2025). The two systems evaluate content through related but meaningfully distinct lenses.

Answer Engine Optimization (AEO) is a related term you’ll encounter, often used interchangeably with GEO. The practical difference is narrow: AEO tends to focus on zero-click answer capture (featured snippets, voice search, PAA boxes), while GEO specifically addresses optimization for generative AI platforms that synthesize multi-source responses. For B2B marketers, GEO is the more useful frame — it encompasses the full stack of content structure, off-site authority, technical accessibility, and measurement that determines whether ChatGPT recommends your brand.

This article is a GEO playbook built specifically for B2B. The strategies apply across ChatGPT, Perplexity, and Google AI Overviews — with platform-specific nuances noted where relevant.

Why ChatGPT Is Now a B2B Discovery Channel (And Why Traditional SEO Won’t Get You There)

ChatGPT reached 800–900 million weekly active users by late 2025, with 5.8 billion monthly visits and 2 billion daily prompts worldwide. As a discovery platform, it has moved well past novelty — and B2B buyers are disproportionately driving that usage.

80% of global B2B tech buyers use it as much as traditional search when researching vendors. Among 25–34-year-old buyers — the demographic now running most technology evaluations — 85% use AI for supplier research. These aren’t marginal numbers. They represent the primary research behavior of the people your sales team is trying to reach.

The pipeline implication is specific and measurable. AI-referred visitors are 4.4× more qualified than traditional organic visitors. Brands that appear in AI-generated answers see a 38% lift in clicks and a 39% boost in paid ad performance. And the advantage compounds: in 95% of deals, the winning vendor was already on the buyer’s Day One shortlist before first contact. ChatGPT is where that shortlist gets formed.

The failure mode of traditional SEO content in this context is worth naming precisely. B2B websites are full of sentences like: “Our platform empowers teams to drive transformational outcomes through innovative workflows.” ChatGPT cannot do anything with that sentence. It can’t extract a category, a use case, a differentiator, or a recommendation. When a buyer asks “what’s the best tool for automating B2B sales workflows,” ChatGPT ignores your content and cites a competitor whose page opens with: “Outreach is a sales execution platform that automates follow-up sequences for B2B revenue teams with 50–500 reps.”

The difference isn’t content volume. It’s content legibility to AI.

There’s a second structural problem: Google rankings don’t predict ChatGPT visibility. The two platforms use fundamentally different retrieval architectures. If only 12% of ChatGPT-cited URLs rank in Google’s top 10, a Google-only SEO strategy leaves B2B teams with an 88% blind spot in AI search coverage. They usually don’t know it, because fewer than 1% of ChatGPT-referred sessions appear in standard analytics as anything other than “direct” traffic.

Key takeaway: 94% of B2B buyers use LLMs during their purchasing journey, AI-referred visitors are 4.4× more qualified than organic visitors, and 95% of winning vendors are on the Day One shortlist before first contact. That is the shortlist ChatGPT is now helping form.

How ChatGPT Actually Decides What to Recommend

Most B2B marketers treat ChatGPT as a black box that occasionally mentions brands. Understanding its two retrieval modes turns that black box into something you can systematically influence.

Mode 1: Parametric (Training Data)

ChatGPT’s base model was trained on a large-scale snapshot of the web. Everything it “knows” without doing a live search comes from that training — which means brands mentioned frequently and consistently across authoritative sources during the training window are baked into ChatGPT’s baseline recommendations. This is why a company with strong Wikipedia coverage, consistent G2 and Capterra profiles, analyst mentions, and a presence in industry publications tends to get recommended even in conversations where no live search occurs. The model has absorbed the web’s consensus about who the credible players in a category are.

For B2B brands, this means off-site brand presence isn’t just a “nice to have” — it directly determines what ChatGPT defaults to before it even queries the web.

Mode 2: Search/RAG (Bing-Based Live Retrieval)

When a prompt signals commercial intent — a buyer comparison, a vendor recommendation request, a product category question — ChatGPT triggers a live web search through Bing and synthesizes fresh results into its response. Analysis of 500+ verified ChatGPT citations found that 87%+ match Bing’s top organic results when web browsing is enabled (Seer Interactive, 2025). Google, by comparison, matches only 56% of ChatGPT citations.

This finding has a stark practical implication: Bing indexing is non-optional for B2B brands that want to appear in the commercial-intent responses their buyers are generating. Most B2B marketing teams have never logged into Bing Webmaster Tools. That’s the gap.

Both modes run in parallel. A strategy that only optimizes for training data (off-site brand building) will have a ceiling once buyers start querying with commercial intent. A strategy that only optimizes for Bing search (fresh, indexed content) won’t deliver consistent recommendations in parametric-mode responses. The compounding advantage goes to brands that do both.

Key takeaway: ChatGPT operates in two distinct modes — parametric (training data) and live search via Bing. Commercial-intent queries, the ones your buyers use most, are more likely to trigger live search mode. Both modes require separate optimization, and Bing is the primary index that matters for real-time retrieval.

Map Your ICP’s ChatGPT Prompts Before You Write a Word

Before optimizing any content, you need to know which specific prompts your buyers are actually typing. This step is almost entirely absent from most ChatGPT ranking guides — and skipping it means optimizing for the wrong queries.

B2B ChatGPT queries look nothing like B2B Google keywords. On Google, a buyer might search “CRM software.” In ChatGPT, that same buyer asks: “What’s the best CRM for a 50-person SaaS company scaling into enterprise, if we need deep HubSpot integration and our sales cycle is 90+ days?” These are longer, scenario-specific, and packed with context. The content that answers them needs to mirror that specificity.

To build your ICP prompt library, focus on three query categories:

Comparison queries: “Best [category] for [use case]” — e.g., “Best FP&A software for mid-market manufacturing companies”

Problem-solution queries: “How to [solve problem] without [constraint]” — e.g., “How to automate customer onboarding without hiring a dedicated CS team”

Buying intent queries: “Which [tool] should I choose for [specific situation]” — e.g., “Should we use Salesforce or HubSpot if we’re 80 people and planning Series B in 18 months?”

The fastest way to populate this list is operational, not technical. Interview your sales reps about what prospects say they researched before the first call. Ask your CS team what questions new customers had during evaluation. Use ChatGPT itself to expand a seed list — paste in three prompts you’ve identified and ask for 20 variations. AnswerThePublic surfaces the longer-tail conversational variants that often match AI search behavior closely.

Aim for a library of 20–30 high-priority prompts organized by buyer stage — awareness-phase prompts (category education), evaluation-phase prompts (comparison, feature questions), and decision-phase prompts (implementation, integration, pricing questions). This prompt library becomes your content roadmap and your monthly measurement framework.
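As a rough sketch, the prompt library can live in a small data structure rather than a loose document. The template strings paraphrase the three query categories above; the names `TEMPLATES`, `Prompt`, `build_prompt`, and `by_stage` are hypothetical, and the stage assigned to each example prompt is an assumption made for illustration.

```python
from dataclasses import dataclass

# Templates paraphrased from the three query categories above.
TEMPLATES = {
    "comparison": "Best {category} for {use_case}",
    "problem_solution": "How to {problem} without {constraint}",
    "buying_intent": "Which {category} should I choose for {situation}",
}


@dataclass(frozen=True)
class Prompt:
    stage: str  # "awareness", "evaluation", or "decision"
    kind: str   # key into TEMPLATES
    text: str


def build_prompt(stage: str, kind: str, **slots: str) -> Prompt:
    """Fill one template; raises KeyError if a slot is missing."""
    return Prompt(stage=stage, kind=kind, text=TEMPLATES[kind].format(**slots))


def by_stage(prompts: list[Prompt]) -> dict[str, list[str]]:
    """Group prompt texts by buyer stage for the content roadmap."""
    grouped: dict[str, list[str]] = {}
    for p in prompts:
        grouped.setdefault(p.stage, []).append(p.text)
    return grouped


# Stage labels here are illustrative assumptions, not a fixed mapping.
library = [
    build_prompt("evaluation", "comparison",
                 category="FP&A software",
                 use_case="mid-market manufacturing companies"),
    build_prompt("awareness", "problem_solution",
                 problem="automate customer onboarding",
                 constraint="hiring a dedicated CS team"),
]
```

Keeping the stage on each prompt means the same list can drive both the content roadmap (group by stage) and the monthly measurement run (iterate over every prompt).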

Key takeaway: B2B ChatGPT queries are longer, scenario-specific, and structurally different from Google keywords. Build a library of 20–30 ICP prompts organized by buyer stage before creating or restructuring any content — this becomes both your content roadmap and your measurement framework.

Structure Your Content So ChatGPT Can Extract and Cite It

This is the highest-leverage on-page optimization in ChatGPT ranking — and most B2B content gets it exactly backwards.

Answer first, always. Traditional B2B content is structured for progressive disclosure: building context, then making the point. AI retrieval inverts this. If your article’s actual answer is buried in paragraph seven after six paragraphs of setup, ChatGPT is unlikely to cite it. Lead every page and every major section with a direct 40–60 word answer to the primary question. No preamble. No “great question, let’s explore.”

Write for extraction, not for readers. Keep paragraphs short: 2–4 sentences. Dense prose blocks degrade AI extraction quality. Each paragraph should carry one idea, stated plainly. SE Ranking’s analysis found that sections of 120–180 words between headings averaged 4.6 citations — 70% more than pages with very short sections under 50 words.
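The length targets above (a 40–60 word lead answer, paragraphs of at most four sentences, sections of 120–180 words) are easy to check mechanically before publishing. The following is a minimal sketch, not an established tool: `lint_section` and its helpers are hypothetical names, paragraphs are assumed to be separated by blank lines, and sentence counting is a rough punctuation heuristic.

```python
import re


def word_count(text: str) -> int:
    return len(text.split())


def paragraphs(section_body: str) -> list[str]:
    """Split on blank lines; assumes paragraphs are separated that way."""
    return [p.strip() for p in re.split(r"\n\s*\n", section_body) if p.strip()]


def sentence_count(paragraph: str) -> int:
    """Rough heuristic: count '.', '!' or '?' followed by whitespace or end of text."""
    return len(re.findall(r"[.!?](?:\s|$)", paragraph))


def lint_section(section_body: str) -> list[str]:
    """Return human-readable warnings for one section (text between two headings)."""
    paras = paragraphs(section_body)
    if not paras:
        return ["section is empty"]
    warnings = []
    lead_words = word_count(paras[0])
    if not 40 <= lead_words <= 60:
        warnings.append(f"lead answer is {lead_words} words (target 40-60)")
    for i, p in enumerate(paras):
        if sentence_count(p) > 4:
            warnings.append(f"paragraph {i + 1} has more than 4 sentences")
    total = word_count(section_body)
    if not 120 <= total <= 180:
        warnings.append(f"section is {total} words (target 120-180)")
    return warnings
```

An empty list means the section meets all three targets; the thresholds are the article's figures and can be loosened if they prove too strict for a given content type.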

On headings — one counterintuitive finding: SE Ranking’s analysis of 129,000 domains found that question-style headings (H2s formatted as “What is X?” or “How do you Y?”) actually underperformed topic-label headings. Pages with topic headings averaged 4.3 citations versus 3.4 for question-format headings. This contradicts years of voice search optimization advice. Use clear topic labels — “Content Freshness and Citation Frequency” outperforms “How Often Should You Update Your Content?”

Content freshness matters mechanically. Pages updated within the last 30 days receive 3.2× more citations than content older than 90 days. Substantive updates — new data, revised examples, expanded sections — earn 3.8× more citations than cosmetic refreshes (a date change and minor copy edits). Build a real refresh cadence into your editorial calendar.

Schema markup is table stakes. 61% of pages cited by ChatGPT use schema markup. Only 12.4% of websites implement any structured data at all. The gap between “doing schema” and “not doing schema” is one of the most reliable citation advantages available, and it’s still mostly uncaptured. FAQPage schema delivers a 28% citation lift in AI results. HowTo schema: 24%. Start with Article schema for all blog posts, FAQPage on any page with Q&A blocks, and Organization schema on your homepage.
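For teams without a CMS plugin that emits structured data, FAQPage markup can be generated from existing Q&A copy. This sketch builds the JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types; the helper names `faq_jsonld` and `as_script_tag` are hypothetical, and the sample question is illustrative only.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script tag that goes in the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    pairs = [("What is GEO?",
              "GEO is the practice of structuring content so AI platforms cite it.")]
    print(as_script_tag(faq_jsonld(pairs)))
```

The questions and answers in the markup should match the visible Q&A text on the page; it is worth validating the output with a structured data testing tool before deploying.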

Before/After Example:

❌ “Our platform helps growing teams unlock their full potential through streamlined collaboration and seamless integrations that drive real business outcomes.”

✅ “Notion is a project management and wiki tool used by B2B SaaS teams to centralize documentation, track projects, and manage OKRs — typically replacing a combination of Confluence, Asana, and Google Docs.”

The second version is extractable. ChatGPT can cite it. The first version is invisible.

One final technical note: ChatGPT’s retrieval pipeline cannot be relied on to execute JavaScript. OpenAI’s own crawlers appear to read raw HTML only, and although Bing’s crawler can render JavaScript, rendering is slower and less reliable than reading server-rendered HTML. If your product descriptions, feature lists, or key differentiators only load after JavaScript execution, they may be invisible to ChatGPT, regardless of how well-written they are. This issue is extremely common on B2B SaaS sites built on modern JavaScript frameworks.
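One way to spot JavaScript-gated content is to check whether your key phrases exist in the raw HTML response, before any script runs. The sketch below works on an HTML string you have already fetched (for example with `curl`); `VisibleText` and `missing_phrases` are hypothetical names, and the approach is an approximation of what a non-rendering crawler sees, not a reproduction of any specific crawler.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script> and <style>, without executing anything."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the un-rendered page text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so line breaks in the markup do not hide a match.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in phrases if p.lower() not in text]
```

Any phrase this returns is present only after client-side rendering (or not at all), which makes the page a candidate for server-side rendering.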

What you need to know: 44.2% of ChatGPT citations come from the first 30% of content. Lead with a direct answer in every section, use topic-label headings (not question format), implement schema markup, and publish substantive content updates on a quarterly schedule.

Build Off-Site Authority — The Signal ChatGPT Trusts Most

Your website is one voice. ChatGPT learns your brand’s credibility from the rest of the web’s opinion of you. For parametric-mode recommendations especially, off-site brand presence is the primary trust signal — and the specific channels that matter for B2B are distinct from general SEO link-building.

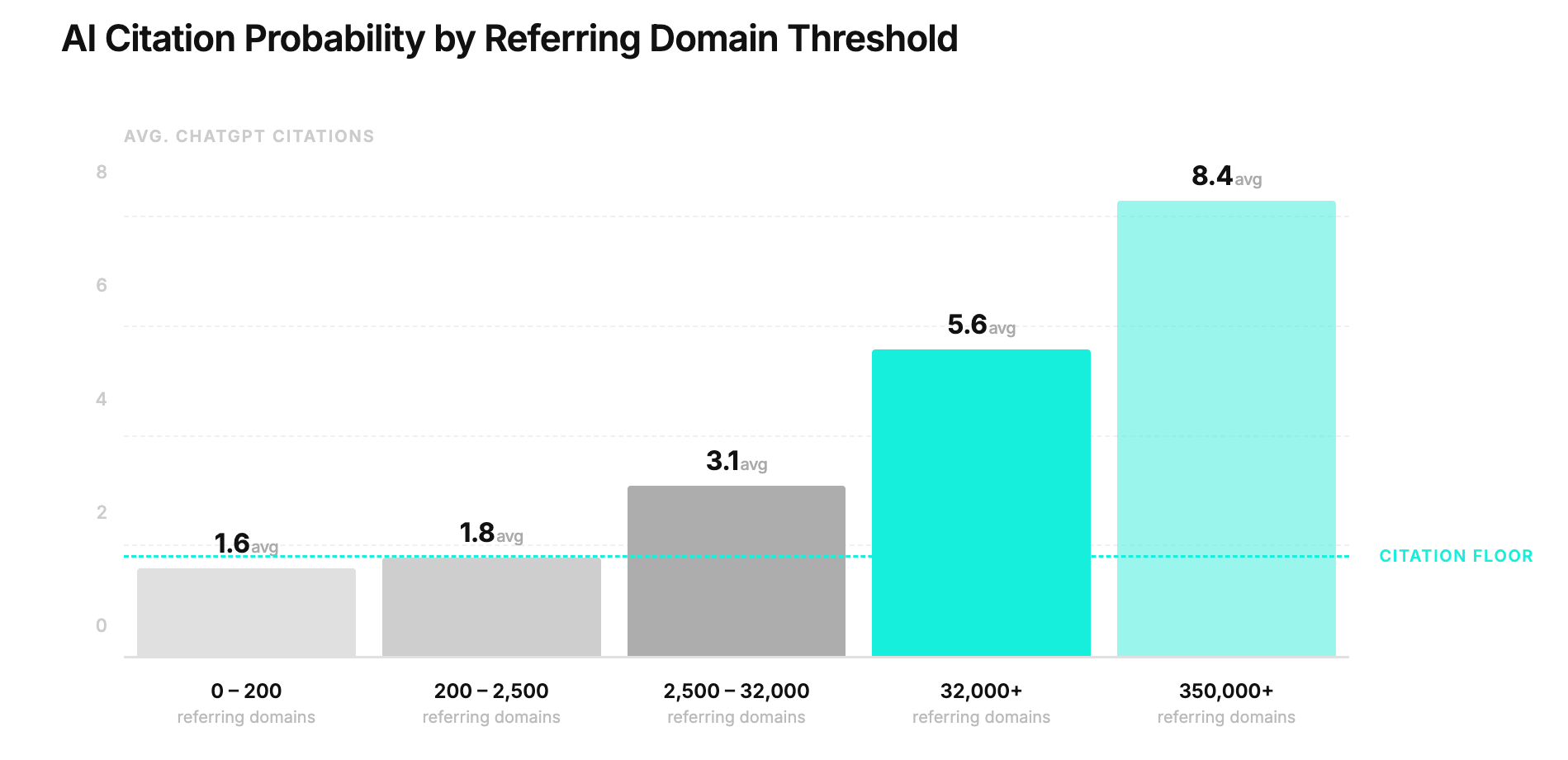

BrightEdge research found that ChatGPT generates 3.2× more brand mentions than direct citations. The implication: being talked about across trusted sources does more for your AI visibility than any single piece of optimized content. Entity presence on 4+ third-party platforms increases citation likelihood by 2.8×.

The B2B-specific off-site channel stack:

Review platforms — G2 and Capterra are not optional for B2B SaaS. G2 has accumulated 100,000+ AI citations and appears in the top five vendor recommendations for HR tech, sales and marketing tech, and digital workplace queries. Capterra and Trustpilot provide the same signal. Active review generation on these platforms doesn’t just help buyers — it feeds ChatGPT’s training and retrieval directly. Review site presence provides a 3× citation advantage.

Community presence — Reddit and Quora participation drives measurable citation impact in ways that surprise most B2B marketers. SE Ranking’s analysis found that domains with significant Reddit presence (10M+ mentions) averaged 7.0 citations versus 1.8 for minimal activity. Quora showed the same pattern. You don’t need millions of mentions — but a consistent, authentic brand presence in relevant subreddits and Quora topics establishes the community validation signals that ChatGPT’s training data rewards.

Industry analyst coverage — A mention in a Gartner Magic Quadrant, Forrester Wave, or G2 Grid report is among the highest-authority brand signals available. If analyst coverage isn’t realistic at your stage, prioritize guest contributions to recognized industry publications where your ICP actually reads.

Digital PR and original research — One of the fastest ways to earn authoritative mentions at scale is to create data worth citing. A study, benchmark report, or industry survey that gets picked up by tech publications generates the kind of cross-domain brand mentions that feed both ChatGPT’s training data and its live retrieval.

Brand consistency as entity infrastructure — ChatGPT resolves brands as entities, not just strings. If your brand name, category label, and core positioning description vary across your website, LinkedIn, G2, Wikipedia, and directories, AI systems struggle to consolidate these mentions into a coherent entity with clear authority. Audit your brand language across every platform and standardize it. The category you claim should be identical everywhere: if you’re a “revenue intelligence platform” on your website, you shouldn’t appear as “sales analytics software” on G2 and “B2B data platform” on Crunchbase. Before doing that audit manually, AI Visibility Checker is a quick way to see how AI crawlers currently read your site — it flags schema gaps and bot access issues that often reveal exactly where entity signals are breaking down.

A note on platform differences: ChatGPT’s citation behavior favors Wikipedia and established .com authority domains; Perplexity favors Reddit and forum content more heavily. A multi-platform off-site strategy addresses both simultaneously.

Key takeaway: Entity presence on 4+ third-party platforms increases ChatGPT citation likelihood by 2.8×. Prioritize G2, Capterra, Reddit, and Quora for B2B — and standardize your brand name, category label, and positioning language identically across every platform.

Technical Setup — What B2B Marketers Miss Before Optimizing

Technical accessibility is a prerequisite. No amount of content structure optimization helps if ChatGPT can’t crawl and index your pages. Most B2B teams that have invested in Google SEO have never touched the infrastructure that ChatGPT actually uses.

1. Bing Webmaster Tools — do this today. If you’re not in Bing, you’re not in ChatGPT’s search mode. Verify your site in Bing Webmaster Tools, submit your XML sitemap, and monitor crawl coverage. Bing weights content freshness and depth somewhat differently than Google — it’s worth a separate 30-minute audit of your top product and category pages against Bing-specific ranking factors.

2. Check your robots.txt for GPTBot. OpenAI’s crawler is called GPTBot. Many B2B sites inadvertently block it with broad “disallow all AI bots” directives added during the initial wave of AI bot blocking. Review your robots.txt file explicitly — User-agent: GPTBot should not be followed by Disallow: /. Similarly, check that PerplexityBot is not blocked if Perplexity visibility matters to you.

3. Implement llms.txt. Modeled on robots.txt, llms.txt is a proposed standard that lets you guide LLMs to your most important pages with brief, human-readable descriptions. Place a /llms.txt file in your root directory with a short description of your company, followed by a structured list of your most important URLs with one-line descriptions of what each page covers. Early adoption could create a crawl architecture advantage if LLMs increasingly rely on this file for site orientation.

4. JavaScript audit. Identify which content on your product pages, landing pages, and blog posts only loads after JavaScript execution. Core content — value propositions, feature descriptions, comparison points — should be server-rendered in clean HTML. This applies particularly to pages that use dynamic content loading, tab-based layouts, or single-page app frameworks.

5. Page health and mobile optimization. Bing’s crawl algorithm rewards technically healthy sites. Core Web Vitals, mobile responsiveness, and crawl efficiency all feed into the quality signals that influence ChatGPT’s search-mode retrieval. One counterintuitive finding from SE Ranking’s data: pages with the absolute fastest responsiveness scores (INP under 0.4s) averaged fewer citations than moderate-speed pages, likely because ultra-thin pages signal low content depth. The goal is technically healthy, not technically stripped.
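For step 3, the file itself is short enough to generate from a list of pages. This sketch follows the layout described in the public llms.txt proposal as I understand it (a title line, a blockquote summary, then a section of annotated links); the function name `render_llms_txt`, the "Key pages" section title, and the example.com URLs are all assumptions for illustration.

```python
def render_llms_txt(name: str, summary: str,
                    pages: list[tuple[str, str, str]]) -> str:
    """Build llms.txt content from (title, url, one-line description) tuples."""
    lines = [f"# {name}", "", f"> {summary}", "", "## Key pages", ""]
    lines += [f"- [{title}]({url}): {desc}" for title, url, desc in pages]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical company and URLs, for illustration only.
    print(render_llms_txt(
        "Acme",
        "Acme is a revenue intelligence platform for B2B sales teams.",
        [
            ("Product overview", "https://example.com/product",
             "What the platform does and who it is for"),
            ("Pricing", "https://example.com/pricing",
             "Plans and per-seat pricing"),
        ],
    ))
```

Save the output as `llms.txt` at the site root and regenerate it whenever the list of priority pages changes.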

The real insight: If Bing can’t crawl your site, ChatGPT’s live search mode can’t cite you. Verify your site in Bing Webmaster Tools, check that GPTBot isn’t blocked in robots.txt, and audit key pages for JavaScript-gated content — these are the silent killers that undermine every other optimization effort.
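The robots.txt check can be scripted with Python's standard library. This is a minimal sketch: `crawler_access` is a hypothetical helper, the user-agent list is an assumption you should extend to the crawlers you care about, and the robots.txt content is passed in as text so the function can be run against a local copy of the file.

```python
from urllib.robotparser import RobotFileParser

# Crawler names to test; extend as needed.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "bingbot")


def crawler_access(robots_txt: str, url: str,
                   agents: tuple[str, ...] = AI_CRAWLERS) -> dict[str, bool]:
    """Report, per crawler, whether robots.txt allows fetching the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(crawler_access(sample, "https://example.com/pricing"))
```

Run it against your most important product and category URLs, not just the homepage, since path-specific `Disallow` rules can block individual sections.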

Measure What Matters — Tracking ChatGPT Visibility as a B2B KPI

Most B2B teams implementing GEO face the same problem six months in: they have no data showing whether anything worked. Standard analytics platforms are nearly blind to AI traffic, and ChatGPT-referred sessions typically appear as direct traffic in GA4. Rank trackers built for Google don’t monitor AI responses. Without a measurement system, optimization is guesswork.

The 5 KPIs for ChatGPT Visibility:

1. Citation Frequency — How often does your brand appear in responses to your target prompt set? Run your 20–30 ICP prompts monthly and log whether your brand appears. This is your core metric.

2. Mention Position — First mention in an AI response carries significantly more weight than fifth. Log not just whether you appear, but where. A brand consistently mentioned third is in a meaningfully different position from one mentioned first.

3. AI Share of Voice — Your citation rate versus competitors across the same prompt set. If you appear in 8 of 30 prompts and your top competitor appears in 14, you have a 27% AI Share of Voice against their 47%. Track this gap over time.

4. Sentiment Quality — What is ChatGPT actually saying about your brand when it mentions you? Extracting the specific language — which use cases, which buyer profiles, which strengths — tells you whether ChatGPT’s representation of your brand matches your positioning. Misalignment here is often fixable with targeted content.

5. Cross-Platform Consistency — Do you appear across ChatGPT, Perplexity, and Gemini, or only one? Broad AI visibility requires platform-specific optimization, and measuring coverage gaps tells you where to focus.
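The AI Share of Voice arithmetic from KPI 3 can be sketched in a few lines. The definition used here is the article's (the share of prompts in the set where a brand appears); `share_of_voice` and the brand names are hypothetical.

```python
def share_of_voice(results: dict[str, list[bool]]) -> dict[str, float]:
    """Map each brand to the percentage of prompts in which it appeared.

    `results` maps brand -> one True/False flag per prompt, in the same
    prompt order for every brand.
    """
    return {brand: 100 * sum(flags) / len(flags)
            for brand, flags in results.items()}


# The worked example from KPI 3: 8 of 30 prompts versus 14 of 30.
results = {
    "YourBrand": [True] * 8 + [False] * 22,
    "Competitor": [True] * 14 + [False] * 16,
}
sov = share_of_voice(results)
print({brand: round(value) for brand, value in sov.items()})
# {'YourBrand': 27, 'Competitor': 47}
```

The gap between the two figures (20 percentage points in this example) is the number to track month over month.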

Tooling options:

Dedicated AI visibility trackers — Otterly.AI, AIclicks, Profound — automate the prompt-running and logging process at scale. For teams earlier in their GEO journey, manual prompt sampling works: run your 20–30 prompts in ChatGPT monthly, log results in a spreadsheet, and score against your 5 KPIs.

Building a monthly visibility audit:

Define your prompt set → run each prompt in ChatGPT and Perplexity → log mention (yes/no), position (1st, 2nd, etc.), sentiment language, and competitors mentioned → calculate your AI Share of Voice score → compare month-over-month → identify which content updates or off-site efforts moved the needle.
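If the audit log lives in a spreadsheet export or a small script, the month-over-month comparison might be computed as follows. This is a sketch under assumptions: the `Observation` fields, the month format, and the function names are hypothetical, and a missing mention is recorded as `position=None`.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Observation:
    month: str               # e.g. "2025-01"
    platform: str            # e.g. "chatgpt" or "perplexity"
    prompt: str
    position: Optional[int]  # 1 = first brand mentioned, None = not mentioned


def monthly_summary(rows: list[Observation], month: str) -> dict[str, float]:
    """Citation rate and average mention position for one month."""
    current = [r for r in rows if r.month == month]
    hits = [r.position for r in current if r.position is not None]
    return {
        "citation_rate": len(hits) / len(current) if current else 0.0,
        "avg_position": mean(hits) if hits else float("nan"),
    }


def month_over_month(rows: list[Observation], previous: str, current: str) -> float:
    """Change in citation rate between two months (positive means improvement)."""
    return (monthly_summary(rows, current)["citation_rate"]
            - monthly_summary(rows, previous)["citation_rate"])
```

Sentiment language and competitor mentions are free text, so they are better kept as extra columns in the log and reviewed by hand than reduced to a number here.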

Connecting AI visibility to pipeline:

To capture AI-referred sessions that don’t appear as organic, use UTM parameters on any inbound links from AI-referenced pages and set up a GA4 custom segment filtering for sessions where the source matches chatgpt.com, perplexity.ai, or bing.com with AI-specific referral patterns. Monitor session duration and conversion rates for this segment separately: the 4.4× quality advantage of AI-referred traffic often shows up first in engagement metrics.
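The same source-matching logic can be applied outside GA4, for example to server logs or an exported session table. This sketch classifies a referrer URL by hostname; `is_ai_referral` is a hypothetical helper, and bing.com is deliberately left out because the AI-specific referral patterns for it are not specified here and would need to be added once identified.

```python
import re
from urllib.parse import urlparse

# Matches the bare domain or any subdomain (e.g. www.perplexity.ai).
AI_REFERRER = re.compile(r"(^|\.)(chatgpt\.com|perplexity\.ai)$")


def is_ai_referral(referrer_url: str) -> bool:
    """True if the referrer's hostname belongs to a known AI platform."""
    host = urlparse(referrer_url).hostname or ""
    return bool(AI_REFERRER.search(host))


if __name__ == "__main__":
    for ref in ("https://chatgpt.com/",
                "https://www.perplexity.ai/search?q=x",
                "https://www.google.com/"):
        print(ref, is_ai_referral(ref))
```

Anchoring the pattern to the end of the hostname avoids false positives from lookalike domains that merely contain one of the names.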

What this means in practice: Standard analytics are nearly blind to AI-referred traffic. Build a monthly prompt-testing protocol of 20–30 ICP queries, track AI Share of Voice against competitors, and measure citation frequency, mention position, and sentiment quality as primary KPIs.

From AI Invisible to AI-Recommended

The mechanics here aren’t mysterious. ChatGPT recommends brands that are clearly defined (answer-first content), technically accessible (Bing-indexed, schema-marked, not gated behind JavaScript), validated by the ecosystem (review platforms, community presence, brand mentions), and consistently present across multiple authoritative surfaces.

What’s counterintuitive is the flywheel: structured content earns citations → citations generate brand mentions across the web → those mentions feed ChatGPT’s training data → training data produces more organic recommendations without live search. Each layer compounds the next.

The urgency is real. The brands building AI visibility now are building a lead that will be substantially harder to close in 18 months. The buyers conducting research sessions in ChatGPT today are forming preferences about vendors they’ll contact in Q3 or Q4. Waiting is not a neutral choice. It just means your competitors’ category content becomes the default source ChatGPT uses to define your industry.

The work covers six dimensions: understanding the two retrieval modes, mapping the prompts your ICP actually uses, restructuring content for extraction, building off-site entity authority, fixing technical accessibility, and measuring AI Share of Voice over time. None of it is beyond what a focused B2B marketing team can execute. It’s the combination, done systematically and measured rigorously, that separates the brands appearing in AI answers from the ones left out of them.